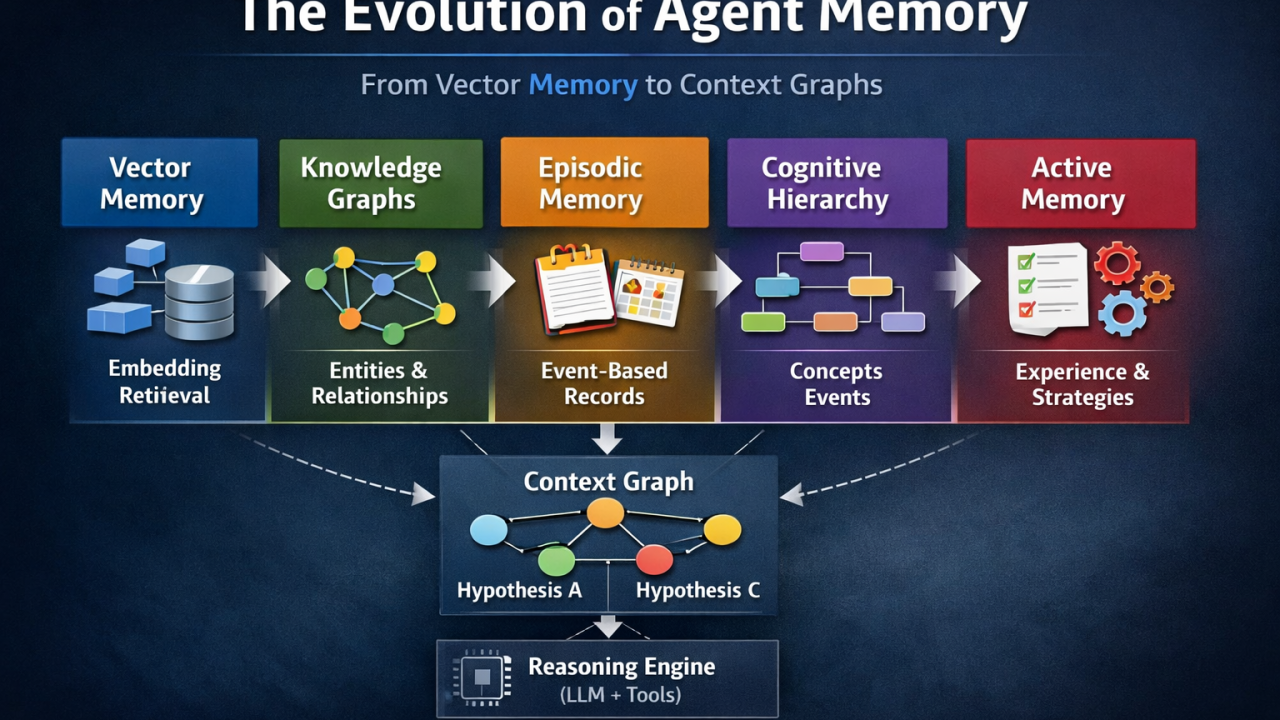

From Vector Memory to Context Graphs: The Evolution of Agent Memory

Pochadri Ariga

CTO & Co-founder · March 4, 2026 · 5 min read

I have been spending some time reading papers and implementations around memory systems for AI agents. One thing became clear very quickly.

Most discussions about agents focus on models, prompts, and orchestration frameworks. But the real constraint seems much simpler: how the system remembers.

Vector memory was the first step

Vector retrieval gave agents the ability to find semantically similar past interactions. You embed a query, search a vector database, and get back the closest matches. This works well for straightforward recall — finding relevant documents, surfacing similar past conversations.
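As a rough illustration of that embed-and-search loop, here is a minimal sketch in plain Python. The three-dimensional vectors are hand-written stand-ins for real embeddings, and `MEMORY` and `search` are hypothetical names used only for this example; a production system would call an embedding model and a vector database instead.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Assumption: toy "embeddings" written by hand. A real system would
# obtain these from an embedding model.
MEMORY = {
    "checkout outage after deploy": [0.9, 0.1, 0.0],
    "payment latency spike": [0.8, 0.3, 0.1],
    "quarterly planning notes": [0.0, 0.2, 0.9],
}

def search(query_vec, k=2):
    """Return the k stored texts whose vectors are closest to the query."""
    ranked = sorted(
        MEMORY,
        key=lambda text: cosine(query_vec, MEMORY[text]),
        reverse=True,
    )
    return ranked[:k]

# The query vector stands in for an embedded question about a failing service.
results = search([1.0, 0.2, 0.0])
```

Note that the result is only a ranked list of texts. Nothing in it says how the matches relate to one another.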

But similarity is not understanding. A vector database can tell you that two things are related. It cannot tell you how they are related, or how that relationship has changed over time.

Then came knowledge graphs and event-based memory

Knowledge graphs added structure. Instead of flat embeddings, you get entities (people, systems, concepts) connected by explicit relationships (works-at, depends-on, caused-by). This lets an agent reason about connections rather than just proximity.
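To make the contrast with flat embeddings concrete, the sketch below stores facts as (subject, relation, object) triples and follows one relation transitively. The `KnowledgeGraph` class and the entity names are hypothetical and exist only for illustration; a real deployment would more likely sit on a graph database.

```python
from collections import defaultdict

class KnowledgeGraph:
    """A tiny in-memory triple store keyed by (subject, relation)."""

    def __init__(self):
        self._edges = defaultdict(set)  # (subject, relation) -> {objects}

    def add(self, subject, relation, obj):
        self._edges[(subject, relation)].add(obj)

    def objects(self, subject, relation):
        # .get avoids creating empty entries for unknown keys.
        return set(self._edges.get((subject, relation), set()))

    def reachable(self, start, relation):
        """Everything reachable from start by following one relation."""
        seen, stack = set(), [start]
        while stack:
            node = stack.pop()
            for nxt in self.objects(node, relation):
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        return seen

# Assumption: made-up entities using the relation names from the text.
kg = KnowledgeGraph()
kg.add("service-a", "depends-on", "service-b")
kg.add("service-b", "depends-on", "postgres")
kg.add("alice", "works-at", "platform-team")

deps = kg.reachable("service-a", "depends-on")
```

Here the agent can answer "what does service-a ultimately depend on?" by walking explicit edges, which a nearest-neighbor lookup cannot do.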

Event-based memory added a temporal dimension, tracking not just what happened but when and in what sequence. This matters when you need to understand causality: did the deployment cause the outage, or was it the configuration change?
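One way to picture event-based memory is as a timestamped log that can be queried by order. The sketch below is a minimal example under stated assumptions: the `Event` type, the sample events, their dates, and the six-hour window are all invented for illustration. Ordering only narrows the list of suspects; it does not establish cause.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass(frozen=True)
class Event:
    at: datetime
    kind: str
    target: str

# Assumption: a hypothetical event log with invented timestamps.
EVENTS = [
    Event(datetime(2026, 3, 1, 9, 0), "config-change", "service-b"),
    Event(datetime(2026, 3, 1, 14, 0), "deployment", "service-b"),
    Event(datetime(2026, 3, 1, 14, 20), "outage", "service-a"),
    Event(datetime(2026, 3, 1, 16, 0), "deployment", "service-c"),
]

def candidates_before(outage, events, window=timedelta(hours=6)):
    """Events inside the window before the outage, most recent first."""
    earlier = [e for e in events if outage.at - window <= e.at < outage.at]
    return sorted(earlier, key=lambda e: e.at, reverse=True)

outage = next(e for e in EVENTS if e.kind == "outage")
suspects = candidates_before(outage, EVENTS)
```

Both the deployment and the configuration change precede the outage, so both stay on the list. The later deployment to service-c is excluded because it happened after the failure.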

Context graphs: what the agent currently believes

More recently, I am seeing ideas around context graphs — a structured representation of what the agent currently believes about the problem it is solving.

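This is a meaningful distinction: memory records what the agent has seen, while a context graph represents what it currently believes.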

Once you start separating those two layers, agent architectures begin to look very different. The memory layer becomes a long-term store that accumulates institutional knowledge. The context graph becomes a working model — assembled fresh for each problem, drawing from memory but structured around the specific question at hand.
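The two-layer split could look something like the sketch below: a long-term store of triples, plus a context graph built per question by pulling in only the facts within a couple of hops of the entity under investigation. Every name here (`LONG_TERM_MEMORY`, `ContextGraph`, `assemble`, the `hops` parameter) and every fact is an assumption made for illustration, not a description of any particular system.

```python
from dataclasses import dataclass, field

# Assumption: hypothetical facts accumulated across many sessions.
LONG_TERM_MEMORY = [
    ("service-a", "depends-on", "service-b"),
    ("service-b", "deployed-on", "2026-03-01"),
    ("service-c", "depends-on", "redis"),
    ("alice", "works-at", "platform-team"),
]

@dataclass
class ContextGraph:
    """Working model for one question: the facts the agent holds right now."""
    question: str
    beliefs: set = field(default_factory=set)

def assemble(question, focus, memory, hops=2):
    """Build a fresh context graph from facts near the focus entity."""
    relevant, frontier = set(), {focus}
    for _ in range(hops):
        # Keep any triple that touches an entity already in the frontier.
        found = {t for t in memory if t[0] in frontier or t[2] in frontier}
        frontier |= {t[0] for t in found} | {t[2] for t in found}
        relevant |= found
    return ContextGraph(question, relevant)

ctx = assemble("Why is service-a failing?", "service-a", LONG_TERM_MEMORY)
```

The context graph holds only the dependency and the recent deployment, while the long-term store is left untouched and keeps the unrelated facts for future questions.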

Why this matters for enterprise agents

In enterprise environments, the challenge is not just retrieving relevant information. It is understanding how systems, people, and processes interconnect — and how those connections change over time.

An agent investigating a production issue needs to know that Service A depends on Service B, that Service B was deployed yesterday, that a similar issue occurred three months ago with a different root cause, and that the engineer who resolved it last time is no longer on the team.

Vector retrieval alone cannot assemble this picture. You need structured memory that preserves relationships and temporal context.

The implication

Memory architecture will become a competitive moat for enterprise AI agents. The agents that compound institutional knowledge — tracking how relationships, preferences, and context evolve across quarters, not just conversations — will outperform those that forget everything between sessions.

This is not about better search. It is about building agents that actually learn your business.

Originally published on LinkedIn
